In the past few years, Kubernetes has become the standard way to orchestrate containers. This article introduces the Kubernetes architecture and its components. By the end, you’ll understand the basics of Kubernetes and be ready to learn more.

What is Kubernetes?

Kubernetes, often abbreviated as k8s, is an open-source container orchestration platform originally developed by Google. It automates the deployment, scaling, and management of containerized applications, allowing developers to focus on writing code without worrying about the underlying infrastructure.

Why use Kubernetes?

Kubernetes offers numerous benefits, including:

- Improved application scalability and availability

- Efficient resource utilization

- Easy deployment and rollback of applications

- Self-healing capabilities

- Extensibility and a thriving ecosystem

Kubernetes Architecture

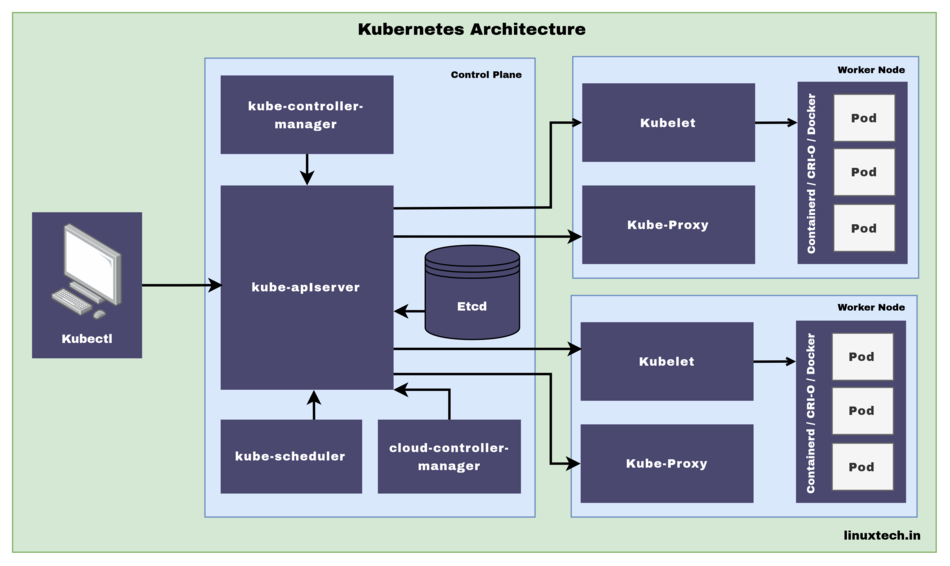

Kubernetes follows a client-server architecture, with two main components: the Control Plane and the Worker Nodes.

Control Plane

The Control Plane is the set of components that manage the overall state of the cluster. These components include:

kube-apiserver

The Kubernetes API server acts as the frontend for the control plane. It exposes the Kubernetes API and processes RESTful requests to manage the cluster state.
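
To make "RESTful requests" a little more concrete, here is a minimal sketch of how resource URLs are laid out. The helper `api_path` is hypothetical and not part of any client library; it only assumes the convention that core resources live under `/api/v1` and all other API groups under `/apis/<group>/<version>`.

```python
def api_path(resource, namespace=None, name=None, group="", version="v1"):
    """Build the REST path for a Kubernetes resource (illustrative helper)."""
    # The core group has an empty name and lives under /api;
    # every named group (for example "apps") lives under /apis/<group>.
    prefix = f"/api/{version}" if group == "" else f"/apis/{group}/{version}"
    parts = [prefix]
    if namespace is not None:
        # Namespaced resources are nested under their namespace.
        parts += ["namespaces", namespace]
    parts.append(resource)
    if name is not None:
        parts.append(name)
    return "/".join(parts)


if __name__ == "__main__":
    print(api_path("pods", namespace="default"))
    print(api_path("deployments", namespace="default", name="web", group="apps"))
    print(api_path("nodes"))
```

A `GET` on the first path lists Pods in the `default` namespace, while a `GET` on the second reads a single Deployment named `web` (an example name).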

kube-controller-manager

Runs the controllers: non-terminating control loops that watch the state of the cluster and move it toward the desired state. Examples include the replication controller, endpoints controller, Node controller, Job controller, EndpointSlice controller, and namespace controller.
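
The idea of a control loop can be sketched in a few lines. This is a toy model, not the real controller code: `run_until_synced` and the `web-N` Pod names are made up for illustration, and the loop stops once the states match so that it can be run, whereas a real controller keeps watching indefinitely.

```python
import itertools


def run_until_synced(desired, pods, prefix="web"):
    """Drive the observed set of Pod names toward the desired replica count.

    `desired` is a non-negative integer; `pods` is a set that is modified in place.
    Returns the list of actions that were taken.
    """
    counter = itertools.count()
    actions = []
    while True:
        if len(pods) < desired:
            # Too few replicas: create a Pod with the next unused name.
            name = next(
                f"{prefix}-{i}" for i in counter if f"{prefix}-{i}" not in pods
            )
            pods.add(name)
            actions.append(("create", name))
        elif len(pods) > desired:
            # Too many replicas: delete one.
            name = max(pods)
            pods.remove(name)
            actions.append(("delete", name))
        else:
            # Observed state matches desired state: nothing to do.
            return actions


if __name__ == "__main__":
    pods = {"web-0"}
    print(run_until_synced(3, pods))  # scale up
    print(run_until_synced(1, pods))  # scale down
```

The key design point is that the loop only compares desired and observed state on each pass; it does not need to remember what it did earlier.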

etcd

A distributed key-value store that holds the configuration and state data of the Kubernetes cluster. It is the backing store for all API objects and provides a reliable, consistent way to store data across a cluster of machines.
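
Two features of this kind of store matter a lot to Kubernetes: every write gets a new revision, and clients can watch keys for changes. The sketch below is an in-memory stand-in that mimics both ideas. `TinyStore` is hypothetical and is not etcd's API, and the `/registry/...` keys are only illustrative; a real etcd cluster also replicates data across members using the Raft consensus algorithm, which this toy ignores.

```python
class TinyStore:
    """In-memory stand-in for a key-value store with revisions and watchers."""

    def __init__(self):
        self.data = {}       # key -> (value, revision of last write)
        self.revision = 0    # store-wide counter, bumped on every write
        self.watchers = []   # list of (key prefix, callback)

    def watch(self, prefix, callback):
        """Call `callback(key, value, revision)` for writes under `prefix`."""
        self.watchers.append((prefix, callback))

    def put(self, key, value):
        """Write a value, bump the revision, and notify matching watchers."""
        self.revision += 1
        self.data[key] = (value, self.revision)
        for prefix, callback in self.watchers:
            if key.startswith(prefix):
                callback(key, value, self.revision)
        return self.revision

    def get(self, key):
        """Return (value, revision) for `key`, or None if it is absent."""
        return self.data.get(key)


if __name__ == "__main__":
    store = TinyStore()
    store.watch("/registry/pods/", lambda k, v, r: print("changed:", k, v, r))
    store.put("/registry/pods/default/web", {"phase": "Pending"})
    store.put("/registry/pods/default/web", {"phase": "Running"})
```

Watches are what let the other control plane components react to changes instead of polling.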

kube-scheduler

Responsible for placing Pods on the most suitable Worker Nodes based on resource requirements, quality of service, policies, and other factors.
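
Scheduling can be thought of as two steps: filter out nodes that cannot run the Pod, then score the remaining ones and pick the best. The sketch below models only CPU and memory requests; the `schedule` function, the dictionary fields, and the scoring rule (prefer the node with the most CPU left over) are assumptions made for illustration, not the scheduler's actual policy.

```python
def schedule(pod, nodes):
    """Pick a node name for `pod` by filtering, then scoring (toy model)."""
    # Filter: keep only nodes with enough free CPU and memory for the requests.
    feasible = [
        n for n in nodes
        if n["free_cpu"] >= pod["cpu"] and n["free_mem"] >= pod["mem"]
    ]
    if not feasible:
        return None  # no node fits; in a real cluster the Pod stays Pending

    # Score: prefer the node with the most CPU remaining after placement.
    best = max(feasible, key=lambda n: n["free_cpu"] - pod["cpu"])
    return best["name"]


if __name__ == "__main__":
    nodes = [
        {"name": "node-a", "free_cpu": 1.0, "free_mem": 2048},
        {"name": "node-b", "free_cpu": 4.0, "free_mem": 512},
        {"name": "node-c", "free_cpu": 2.0, "free_mem": 4096},
    ]
    print(schedule({"cpu": 0.5, "mem": 1024}, nodes))
```

Here `node-b` has the most CPU but is filtered out because it lacks memory, which shows why filtering happens before scoring.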

cloud-controller-manager

The Kubernetes component that runs controller loops to manage cloud provider-specific resources through their respective APIs. It enables better separation of concerns between Kubernetes and the underlying cloud infrastructure.

Worker Nodes

Worker Nodes, previously called Minions, are responsible for running containerized applications. They have several components:

Container Runtime

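The software responsible for running containers, such as containerd or CRI-O. Docker Engine can also be used, but since Kubernetes 1.24 removed the built-in dockershim, it requires the cri-dockerd adapter.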

Kubelet

An agent that runs on each worker node, ensuring containers are running as expected in a Pod.

Kube-proxy

A network proxy that runs on each worker node, maintaining network rules and enabling communication between Pods and Services within or outside the cluster.

Kubernetes Components

Kubernetes introduces several abstractions and objects that make it easier to manage containerized applications, including:

Pods

The smallest deployable unit in Kubernetes. A Pod represents a single instance of a running process in a cluster and can contain one or more containers, which share the Pod’s network namespace and storage volumes.

Services

A stable way to expose applications running in Pods to the network, either within the cluster or externally. Services provide load balancing, service discovery, and a stable IP address.
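
A Service finds its backing Pods through a label selector and spreads traffic across the ones that are ready. The sketch below shows that matching step. The `select_endpoints` helper, the Pod dictionaries, and the IP addresses are hypothetical, and round-robin is used only to keep the example deterministic (kube-proxy in iptables mode, for instance, picks a backend at random).

```python
import itertools


def select_endpoints(selector, pods):
    """Return the IPs of ready Pods whose labels include every selector pair."""
    return [
        p["ip"]
        for p in pods
        # A Pod matches when the selector is a subset of its labels.
        if p["ready"] and selector.items() <= p["labels"].items()
    ]


if __name__ == "__main__":
    pods = [
        {"ip": "10.0.0.1", "ready": True, "labels": {"app": "web", "tier": "frontend"}},
        {"ip": "10.0.0.2", "ready": True, "labels": {"app": "db"}},
        {"ip": "10.0.0.3", "ready": True, "labels": {"app": "web"}},
        {"ip": "10.0.0.4", "ready": False, "labels": {"app": "web"}},
    ]
    endpoints = select_endpoints({"app": "web"}, pods)
    backend = itertools.cycle(endpoints)  # simple round-robin over the endpoints
    for _ in range(3):
        print("route request to", next(backend))
```

Because clients talk to the Service rather than to individual Pods, Pods can come and go without clients noticing.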

Deployments

A higher-level abstraction that describes the desired state of an application. Deployments manage the creation, scaling, and updating of Pods, ensuring the desired number of replicas are running at all times.
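
For a feel of what "desired state" looks like, the sketch below builds a Deployment manifest as a Python dictionary and prints it as JSON, which `kubectl apply -f` accepts alongside YAML. The `make_deployment` helper, the name `web`, and the image `nginx:1.27` are example values chosen for illustration.

```python
import json


def make_deployment(name, image, replicas):
    """Build a minimal apps/v1 Deployment manifest as a plain dictionary."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            # Desired number of Pod replicas.
            "replicas": replicas,
            # The selector must match the labels in the Pod template below.
            "selector": {"matchLabels": dict(labels)},
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {"containers": [{"name": name, "image": image}]},
            },
        },
    }


if __name__ == "__main__":
    print(json.dumps(make_deployment("web", "nginx:1.27", 3), indent=2))
```

Changing `replicas` or `image` and applying the manifest again is how scaling and rolling updates are typically requested.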

ReplicaSets

A ReplicaSet ensures that the desired number of Pod replicas is running at any given time. ReplicaSets are usually created and managed by Deployments, so you rarely need to create them directly.

Volume

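A volume provides storage that the containers in a Pod can share and that outlives any single container, so data survives container restarts. For data that must also survive Pod rescheduling, Kubernetes offers PersistentVolumes, whose lifecycle is independent of any Pod.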
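
As an illustration, the sketch below describes a Pod in which two containers share one `emptyDir` volume, a simple volume type that lives as long as the Pod does. The manifest is written as a Python dictionary; the Pod name, container names, image tag, and the `undeclared_mounts` checker are all made up for this example.

```python
POD = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "shared-logs"},
    "spec": {
        # Volumes are declared once at the Pod level...
        "volumes": [{"name": "logs", "emptyDir": {}}],
        "containers": [
            {
                "name": "app",
                "image": "busybox:1.36",
                "command": ["sh", "-c",
                            "while true; do date >> /var/log/app/out.log; sleep 5; done"],
                # ...and mounted by name into each container that needs them.
                "volumeMounts": [{"name": "logs", "mountPath": "/var/log/app"}],
            },
            {
                "name": "tailer",
                "image": "busybox:1.36",
                "command": ["sh", "-c", "tail -F /logs/out.log"],
                "volumeMounts": [{"name": "logs", "mountPath": "/logs"}],
            },
        ],
    },
}


def undeclared_mounts(pod):
    """Return volume names that are mounted by a container but never declared."""
    declared = {v["name"] for v in pod["spec"].get("volumes", [])}
    return sorted({
        m["name"]
        for c in pod["spec"]["containers"]
        for m in c.get("volumeMounts", [])
        if m["name"] not in declared
    })


if __name__ == "__main__":
    print(undeclared_mounts(POD))
```

Both containers see the same files, each under its own mount path, which is a common way to pair an application with a log-shipping sidecar.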

ConfigMap and Secret

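ConfigMaps and Secrets hold the configuration data and sensitive values used by Pods, keeping them separate from container images. ConfigMaps store non-confidential settings, while Secrets store credentials such as passwords and tokens. Secret values are only base64-encoded and are stored unencrypted in etcd by default, so access control and encryption at rest need to be configured separately.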
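
One detail worth seeing in code is that the values in a Secret's `data` field are base64-encoded, which is an encoding rather than encryption. The sketch below builds such a manifest and decodes it again; the helper names, the Secret name `db-credentials`, and the sample values are hypothetical.

```python
import base64


def encode_secret_data(plain):
    """Base64-encode each value, as the `data` field of a Secret expects."""
    return {
        key: base64.b64encode(value.encode("utf-8")).decode("ascii")
        for key, value in plain.items()
    }


def decode_secret_data(data):
    """Reverse the encoding; anyone who can read the Secret can do this."""
    return {
        key: base64.b64decode(value).decode("utf-8")
        for key, value in data.items()
    }


def make_secret(name, plain):
    """Build a minimal v1 Secret manifest as a plain dictionary."""
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name},
        "type": "Opaque",
        "data": encode_secret_data(plain),
    }


if __name__ == "__main__":
    secret = make_secret("db-credentials", {"username": "admin", "password": "s3cr3t"})
    print(secret["data"])
    print(decode_secret_data(secret["data"]))
```

Since decoding needs no key, protecting Secrets depends on who is allowed to read them, not on the encoding itself.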

Conclusion

This introduction to Kubernetes provides an overview of the architecture and core components that make up this powerful container orchestration platform. As you progress in your learning journey, you’ll discover many more advanced features and concepts that will help you effectively manage and scale containerized applications.

In the next part of our Kubernetes learning series, we’ll cover setting up a Kubernetes cluster and installing essential tools to get you started on your path to Kubernetes mastery.

Got any queries or feedback? Feel free to drop a comment below!